Over-Engineered on Purpose — Part 8: The Common Module, Auto-Configuration, and Making gRPC Authentication Invisible

This is Part 8 of a series where I'm building a microservice platform from scratch. Part 7 covers the authorization server and OAuth2. Full codebase on GitHub.

After setting up the auth server in Part 7, the next step was obvious: make the tokens actually flow through gRPC calls. So I built a client interceptor on the BFF to attach tokens to outgoing requests, and a server interceptor on the Catalog Service to validate them on the way in.

It worked. Then I needed the same interceptors on the Booking Service. Same code. Copy, paste. Then the User Service needed the server interceptor. Copy, paste. Then I realized the token resolution logic — deciding between user tokens and service tokens — was also being duplicated. And the GrpcChannelFactory with the Eureka name resolver from Part 5. And the pagination helper. And the exception mapping.

I was building a monolith of duplicated code across my microservices. The irony wasn't lost on me.

So I created a common module. And that simple decision — "move the shared stuff to one place" — turned into one of the deepest Spring learning experiences of this entire project. Because moving code is easy. Making it wire itself up automatically in every service that depends on it? That's auto-configuration. And auto-configuration is a whole world.

What Lives in Common

Before getting into how it works, here's what ended up in the module:

common/src/main/java/com/rentitup/common/
├── grpc/
│   ├── GrpcAutoConfiguration
│   ├── GrpcChannelFactory
│   ├── EurekaNameResolver
│   ├── EurekaNameResolverProvider
│   ├── GrpcExceptionHandler
│   ├── client/
│   │   ├── @GrpcClient annotation
│   │   ├── GrpcClientBeanPostProcessor
│   │   ├── BearerTokenInterceptor
│   │   ├── TokenResolver
│   │   ├── GrpcContextTokenSupplier
│   │   ├── ServiceTokenProvider
│   │   └── auto-configuration classes
│   └── server/
│       ├── GrpcAuthInterceptor
│       ├── GrpcAuthContext
│       └── auto-configuration classes
├── exceptions/
│   ├── NotFoundException → NOT_FOUND
│   ├── BadRequestException → INVALID_ARGUMENT
│   ├── ConflictException → ALREADY_EXISTS
│   ├── UnauthorizedException → UNAUTHENTICATED
│   ├── ForbiddenException → PERMISSION_DENIED
│   └── ServiceUnavailableException → UNAVAILABLE
├── security/
│   └── SecurityUtils
└── util/
    └── PaginationHelper

Any service that adds implementation(project(":common")) to its Gradle build gets all of this (gRPC client stub injection, token propagation, server-side authentication, exception handling, security utilities) wired up automatically. No manual bean registration. No copy-pasting interceptors.

That's the goal. Getting there was the journey.

Learning Auto-Configuration

In a normal Spring application, you annotate classes with @Configuration, declare @Bean methods inside them, and Spring picks them up through component scanning. But the common module isn't part of any service's component scan path; it's an external library on the classpath. Component scanning only covers the application's own base packages by default, so you have to tell Spring what to load.

This is what auto-configuration is for. You create classes annotated with @AutoConfiguration and register them in a file called META-INF/spring/org.springframework.boot.autoconfigure.AutoConfiguration.imports. When Spring Boot starts, it reads this file and conditionally loads the beans.

The "conditionally" part is where it gets interesting. Different services need different beans:

- The BFF needs the client interceptor and token resolver with both user token and service token support, but doesn't need the server interceptor (it's not a gRPC server)
- The Catalog Service needs the server interceptor and its own client interceptor (for calling User Service), plus a service token provider
- The User Service only receives gRPC calls: it needs the server interceptor but no service token provider, since it doesn't call other services

One module, multiple configurations, all conditional. Spring provides the tools for this: @ConditionalOnBean, @ConditionalOnProperty, @ConditionalOnMissingBean, @ConditionalOnClass. You declare conditions, and Spring evaluates them to decide which beans to create.

My auto-configuration loads in five stages:

1. GrpcAutoConfiguration → GrpcChannelFactory (needs Eureka)

2. GrpcTokenAutoConfiguration → TokenResolver, ServiceTokenProvider

3. GrpcClientsAutoConfiguration → BearerTokenInterceptor, BeanPostProcessor

4. GrpcSecurityConfig → permitAll on Spring's gRPC security

5. GrpcAuthAutoConfiguration → GrpcAuthInterceptor (needs JwtDecoder)

Each stage depends on the one before it, enforced with @AutoConfiguration(after = ...). The token config only creates a ServiceTokenProvider if OAuth2 client credentials are configured. The auth interceptor only appears if a JwtDecoder bean exists (which it does when the service has spring.security.oauth2.resourceserver.jwt properties). The security config only loads if GrpcSecurity is on the classpath.

The result: each service gets exactly the beans it needs based on its configuration, without declaring any of them explicitly.

Building @GrpcClient

One thing I noticed in the net.devh gRPC starter (the library I chose not to use in Part 5) was a @GrpcClient annotation. You annotate a stub field, and the library injects it automatically. Clean, no boilerplate.

I wanted the same thing. In my services, I wanted to write:

@RestController
public class CatalogController {

    @GrpcClient("CATALOG-SERVICE")
    private CatalogServiceGrpc.CatalogServiceBlockingStub catalogStub;

    @GetMapping("/machines")
    public ResponseEntity<?> getMachines() {
        // Just use the stub: channel, interceptors, everything is already wired
        // (building the request message is omitted here)
        var response = catalogStub.listMachines(request);
        return ResponseEntity.ok(response);
    }
}

No manual channel creation. No stub factory. The service name matches the Eureka registration, and everything else is handled.

To make this work, I built a GrpcClientBeanPostProcessor. A BeanPostProcessor is a Spring hook that lets you intercept every bean as it is created, just before or just after its initialization callbacks run. My processor scans every bean for fields annotated with @GrpcClient, and for each one:

- Extracts the service name from the annotation
- Gets or creates a channel from GrpcChannelFactory (targeting eureka:///SERVICE-NAME with round-robin load balancing)
- Detects the stub type from the class name: BlockingStub, FutureStub, or Stub (async)
- Calls the matching factory method via reflection (newBlockingStub(channel))
- Injects the stub into the field

Channels are cached per service — multiple fields pointing to CATALOG-SERVICE share one channel. And because the GrpcChannelFactory applies interceptors when creating channels, every stub automatically gets the BearerTokenInterceptor. Authentication is invisible to the service code.
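The steps above can be sketched in plain Java. This is not the module's real processor: `GrpcClientSketch`, `FakeChannel`, `FakeCatalogGrpc`, `StubInjectorSketch`, and `DemoBean` are all hypothetical stand-ins so the example runs without Spring or gRPC on the classpath. It assumes what generated gRPC code normally looks like: the stub type is nested inside the `*Grpc` class that owns the static factory methods.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Field;
import java.lang.reflect.Method;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

/** Hypothetical stand-in for the module's @GrpcClient annotation. */
@Retention(RetentionPolicy.RUNTIME)
@Target(ElementType.FIELD)
@interface GrpcClientSketch {
    String value();
}

/** Stand-in for a gRPC channel, so the sketch needs no gRPC dependency. */
final class FakeChannel {
    final String target;
    FakeChannel(String target) { this.target = target; }
}

/** Mimics a generated *Grpc class: a nested stub type plus a static factory. */
final class FakeCatalogGrpc {
    static final class FakeCatalogBlockingStub {
        final FakeChannel channel;
        FakeCatalogBlockingStub(FakeChannel channel) { this.channel = channel; }
    }
    static FakeCatalogBlockingStub newBlockingStub(FakeChannel channel) {
        return new FakeCatalogBlockingStub(channel);
    }
}

final class StubInjectorSketch {
    // One channel per service name, shared by every field that targets it.
    private final Map<String, FakeChannel> channels = new ConcurrentHashMap<>();

    /** Injects a stub into every field of the bean that carries the annotation. */
    void inject(Object bean) {
        for (Field field : bean.getClass().getDeclaredFields()) {
            GrpcClientSketch client = field.getAnnotation(GrpcClientSketch.class);
            if (client == null) {
                continue;
            }
            FakeChannel channel = channels.computeIfAbsent(
                    client.value(), name -> new FakeChannel("eureka:///" + name));
            Class<?> stubType = field.getType();
            try {
                // The enclosing *Grpc class owns the static factory methods.
                Method factory = stubType.getEnclosingClass()
                        .getDeclaredMethod(factoryMethodName(stubType), FakeChannel.class);
                field.setAccessible(true);
                field.set(bean, factory.invoke(null, channel));
            } catch (ReflectiveOperationException e) {
                throw new IllegalStateException("Cannot inject stub into " + field, e);
            }
        }
    }

    /** Order matters: "BlockingStub" and "FutureStub" also end with "Stub". */
    static String factoryMethodName(Class<?> stubType) {
        String name = stubType.getSimpleName();
        if (name.endsWith("BlockingStub")) return "newBlockingStub";
        if (name.endsWith("FutureStub")) return "newFutureStub";
        if (name.endsWith("Stub")) return "newStub";
        throw new IllegalArgumentException("Not a gRPC stub type: " + name);
    }
}

/** Example bean with two fields that point at the same service. */
final class DemoBean {
    @GrpcClientSketch("CATALOG-SERVICE")
    private FakeCatalogGrpc.FakeCatalogBlockingStub first;
    @GrpcClientSketch("CATALOG-SERVICE")
    private FakeCatalogGrpc.FakeCatalogBlockingStub second;

    FakeCatalogGrpc.FakeCatalogBlockingStub first() { return first; }
    FakeCatalogGrpc.FakeCatalogBlockingStub second() { return second; }
}
```

Calling `new StubInjectorSketch().inject(new DemoBean())` fills both fields, and both stubs end up sharing the single cached channel for CATALOG-SERVICE. A real processor would do the same work from a BeanPostProcessor callback, with the real channel factory in place of `FakeChannel`.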

This was where I used AI most heavily. I described the pattern — "I want an annotation that triggers stub injection through a BeanPostProcessor" — and used Claude to help scaffold the implementation. The reflection-based stub detection, the factory method lookup, the field injection — I understood what needed to happen but not all the Spring internals to make it happen. I don't fully understand every line of the BeanPostProcessor. But I understand the architecture, and when it broke (which it did, repeatedly), I could debug it because I knew what each piece was supposed to do.

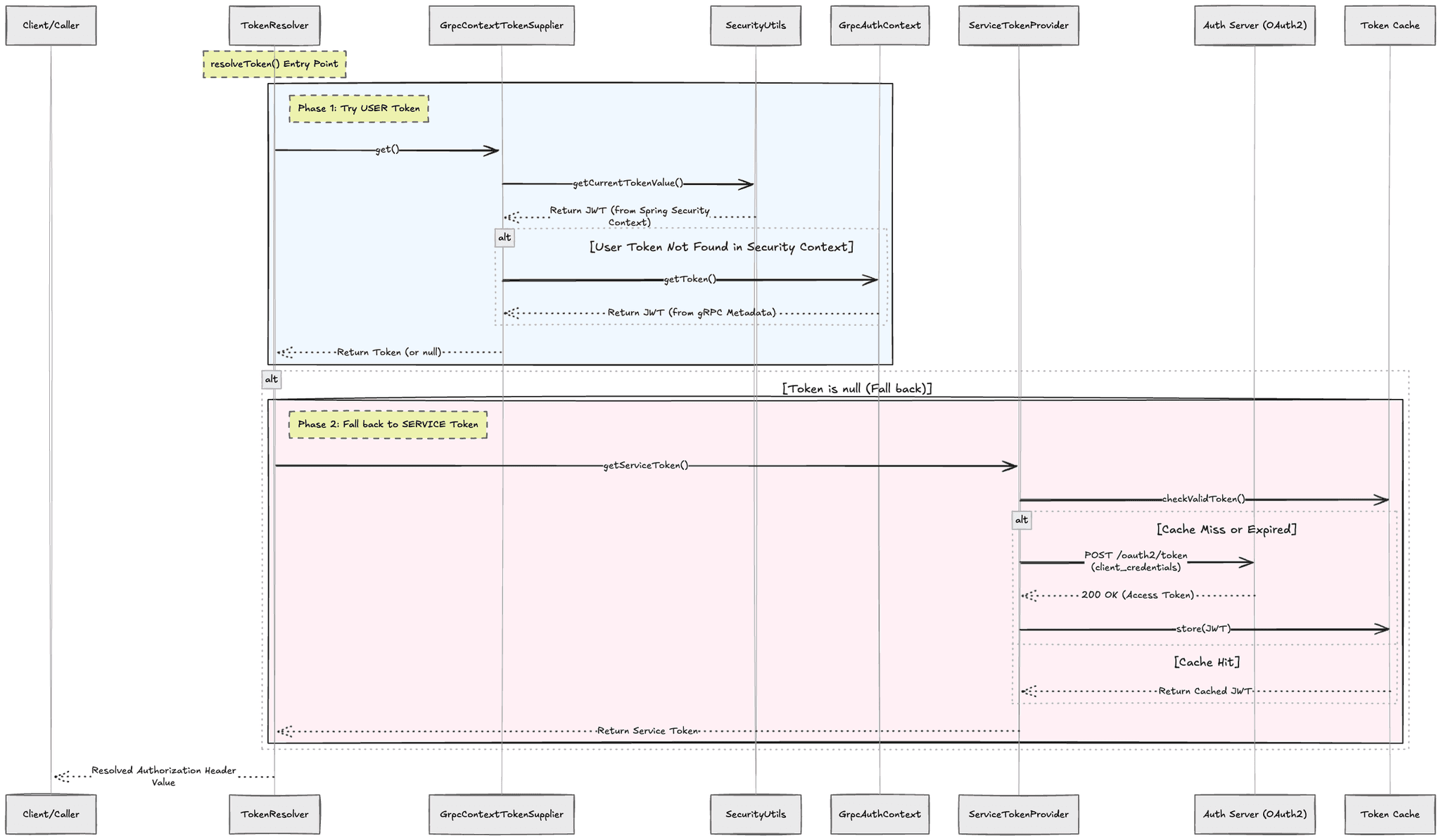

The Token Resolution Chain

With @GrpcClient handling stub injection, the next piece is making sure every outgoing call carries the right token. This is the problem from the intro — which token do I send?

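The TokenResolver uses a two-level strategy: it forwards the caller's token when the GrpcContextTokenSupplier finds one, and otherwise falls back to a service token from the ServiceTokenProvider.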
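To make the two levels concrete, here is a minimal sketch in plain Java. It is not the module's actual code: `TokenResolverSketch` and its constructor parameters are hypothetical, with a `Supplier` standing in for each token source. The user-level source is tried first, and the service-level source is only consulted when no user token is found.

```java
import java.util.Optional;
import java.util.function.Supplier;

/** Hypothetical stand-in for the module's TokenResolver; names are assumptions. */
final class TokenResolverSketch {
    private final Supplier<Optional<String>> userTokenSource;
    private final Supplier<Optional<String>> serviceTokenSource;

    TokenResolverSketch(Supplier<Optional<String>> userTokenSource,
                        Supplier<Optional<String>> serviceTokenSource) {
        this.userTokenSource = userTokenSource;
        this.serviceTokenSource = serviceTokenSource;
    }

    /** Level 1: forward the caller's token. Level 2: fall back to a service token. */
    Optional<String> resolveToken() {
        Optional<String> userToken = userTokenSource.get();
        if (userToken.isPresent()) {
            return userToken;
        }
        return serviceTokenSource.get();
    }
}
```

Passing `Optional::empty` as the second source models a service that has no client credentials configured: the resolver then only ever forwards tokens it was given.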

The GrpcContextTokenSupplier checks two places because tokens can come from two worlds. If the call originates from the BFF handling an HTTP request, the user's JWT is in Spring's SecurityContextHolder. If the call originates from a gRPC service that received a forwarded token, it's in gRPC's Context — because gRPC uses its own thread model, and Spring's thread-local storage doesn't propagate into gRPC threads.

This dual-context support is subtle but critical. Without it, token forwarding through service chains breaks. The Booking Service receives a user token via gRPC, stores it in GrpcAuthContext, then when it calls the Catalog Service, the GrpcContextTokenSupplier finds it there and the BearerTokenInterceptor forwards it.

The ServiceTokenProvider is the fallback. It calls the auth server's token endpoint with the service's client credentials, gets a service JWT, and caches it with a refresh buffer before expiration. Background jobs, scheduled tasks, startup operations — anything without a user context — automatically gets a service token.
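The caching behaviour can be sketched like this. Everything here is an assumption made for illustration: `CachedServiceToken`, its `Token` holder, and the idea of passing the token endpoint call and the clock in as `Supplier`s are mine, not the module's real ServiceTokenProvider. The point is only the refresh rule: a cached token is reused until the current time reaches its expiry minus the buffer.

```java
import java.time.Duration;
import java.time.Instant;
import java.util.function.Supplier;

/** Hypothetical sketch of a service token cache with a refresh buffer. */
final class CachedServiceToken {
    /** A token value together with the instant it expires. */
    static final class Token {
        final String value;
        final Instant expiresAt;
        Token(String value, Instant expiresAt) {
            this.value = value;
            this.expiresAt = expiresAt;
        }
    }

    private final Supplier<Token> tokenEndpoint; // stands in for the client-credentials call
    private final Duration refreshBuffer;
    private final Supplier<Instant> clock;       // injectable so the behaviour is testable
    private Token cached;

    CachedServiceToken(Supplier<Token> tokenEndpoint,
                       Duration refreshBuffer,
                       Supplier<Instant> clock) {
        this.tokenEndpoint = tokenEndpoint;
        this.refreshBuffer = refreshBuffer;
        this.clock = clock;
    }

    /** Returns the cached token, fetching a new one once the buffer is reached. */
    synchronized String get() {
        Instant now = clock.get();
        boolean needsRefresh = cached == null
                || !now.isBefore(cached.expiresAt.minus(refreshBuffer));
        if (needsRefresh) {
            cached = tokenEndpoint.get();
        }
        return cached.value;
    }
}
```

With a 30-second buffer and a token that lives for 300 seconds, calls during the first 270 seconds reuse the cached value, and the first call after that triggers a new request to the endpoint.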

The BearerTokenInterceptor ties it together. When I first read the Spring gRPC docs on how to add a client interceptor, it looked simple — slap @GlobalClientInterceptor on the bean and it applies to all outgoing calls. Done.

Except it didn't work for me. Remember, back in Part 5 I built a custom GrpcChannelFactory with the Eureka name resolver and round-robin load balancing. That means I'm creating channels manually with ManagedChannelBuilder, not using Spring gRPC's default channel management. The @GlobalClientInterceptor annotation works with Spring's managed channels — not mine.

So I had to wire it manually. The GrpcChannelFactory accepts an ObjectProvider<ClientInterceptor>, resolves the interceptors lazily (to avoid circular dependency issues), and applies them when building each channel:

ManagedChannelBuilder<?> builder = ManagedChannelBuilder
        .forTarget("eureka:///" + serviceName)
        .defaultLoadBalancingPolicy("round_robin")
        .usePlaintext();

List<ClientInterceptor> interceptors = getInterceptors();
if (!interceptors.isEmpty()) {
    builder.intercept(interceptors);
}

Now every channel created through the factory automatically includes the BearerTokenInterceptor. The interceptor calls TokenResolver.resolveToken() and attaches whatever token it gets to the gRPC metadata as an Authorization: Bearer header.
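The attach step itself is small. The sketch below models it with a plain `Map` in place of gRPC's metadata object, so it runs without gRPC on the classpath; `BearerAttachSketch` and `withBearerToken` are hypothetical names, and the real interceptor would do this inside a gRPC `ClientInterceptor`. gRPC metadata keys are lowercase, which is why the header name is written as `authorization` here.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Optional;
import java.util.function.Supplier;

/** Hypothetical model of what the bearer interceptor does to outgoing headers. */
final class BearerAttachSketch {
    static final String AUTHORIZATION = "authorization";

    /** Returns a copy of the metadata with a bearer header added when a token resolves. */
    static Map<String, String> withBearerToken(Map<String, String> metadata,
                                               Supplier<Optional<String>> tokenResolver) {
        Map<String, String> result = new LinkedHashMap<>(metadata);
        // No token means the call goes out unchanged, e.g. to a public method.
        tokenResolver.get().ifPresent(token -> result.put(AUTHORIZATION, "Bearer " + token));
        return result;
    }
}
```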

The Server Side: Validating and Extracting

On the receiving end, GrpcAuthInterceptor runs as a @GlobalServerInterceptor on every incoming call. It:

- Checks if the method is public (configurable per service in application.yml)
- Extracts the Authorization: Bearer header from metadata
- Validates the JWT using Spring's JwtDecoder (which uses the JWKS public key from Part 7)
- Populates GrpcAuthContext with the token's claims

The context population differs by token type:

- User tokens → user_id, email, role, token_type: USER
- Service tokens → client_id, token_type: SERVICE
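Those four steps, and the split by token type, can be modelled in plain Java. This is a sketch under assumptions, not the real GrpcAuthInterceptor: `AuthCheckSketch` is a hypothetical name, a `Function` stands in for Spring's JwtDecoder, metadata is a plain `Map`, and I am assuming the token type is carried in a `token_type` claim.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Optional;
import java.util.Set;
import java.util.function.Function;

/** Hypothetical model of the server-side check; not the real interceptor. */
final class AuthCheckSketch {
    enum Outcome { PUBLIC, AUTHENTICATED, UNAUTHENTICATED }

    static final class Result {
        final Outcome outcome;
        final Map<String, String> context;
        Result(Outcome outcome, Map<String, String> context) {
            this.outcome = outcome;
            this.context = context;
        }
    }

    private static final String BEARER_PREFIX = "Bearer ";
    private final Set<String> publicMethods;
    // Stand-in for the JWT decoder: returns the claims, or empty when validation fails.
    private final Function<String, Optional<Map<String, String>>> decoder;

    AuthCheckSketch(Set<String> publicMethods,
                    Function<String, Optional<Map<String, String>>> decoder) {
        this.publicMethods = publicMethods;
        this.decoder = decoder;
    }

    Result check(String fullMethodName, Map<String, String> metadata) {
        // 1. Public methods skip authentication entirely.
        if (publicMethods.contains(fullMethodName)) {
            return new Result(Outcome.PUBLIC, new LinkedHashMap<>());
        }
        // 2. Extract the bearer token from the authorization header.
        String header = metadata.get("authorization");
        if (header == null || !header.startsWith(BEARER_PREFIX)) {
            return new Result(Outcome.UNAUTHENTICATED, new LinkedHashMap<>());
        }
        // 3. Validate the token.
        Optional<Map<String, String>> claims =
                decoder.apply(header.substring(BEARER_PREFIX.length()));
        if (!claims.isPresent()) {
            return new Result(Outcome.UNAUTHENTICATED, new LinkedHashMap<>());
        }
        // 4. Populate the auth context from the claims.
        return new Result(Outcome.AUTHENTICATED, contextFrom(claims.get()));
    }

    /** User tokens and service tokens expose different fields. */
    static Map<String, String> contextFrom(Map<String, String> claims) {
        Map<String, String> context = new LinkedHashMap<>();
        if ("SERVICE".equals(claims.get("token_type"))) {
            context.put("client_id", claims.get("client_id"));
            context.put("token_type", "SERVICE");
        } else {
            context.put("user_id", claims.get("user_id"));
            context.put("email", claims.get("email"));
            context.put("role", claims.get("role"));
            context.put("token_type", "USER");
        }
        return context;
    }
}
```

In the real interceptor, an UNAUTHENTICATED outcome would close the call with the matching gRPC status, and the context would be stored where GrpcAuthContext can read it.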

Service code accesses this through GrpcAuthContext:

public void createBooking(CreateBookingRequest request, ...) {
    // Who made this request?
    String userId = GrpcAuthContext.requireUserId();
    String email = GrpcAuthContext.getUserEmail();
    boolean isAdmin = GrpcAuthContext.hasRole("ADMIN");

    // Was this called by a user or another service?
    if (GrpcAuthContext.isServiceToken()) {
        String callingService = GrpcAuthContext.getClientId();
    }
}

Public methods are configured per service:

# user-service
grpc:
  auth:
    public-methods:
      - "rentitup.user.UserService/CreateUser"
      - "rentitup.user.UserService/GetUserByEmail"

CreateUser is public because it's called during registration (no token yet). GetUserByEmail is public because the auth server calls it during login to verify credentials, before any tokens exist.

Per-Service Configuration

Different services have different OAuth2 needs. The BFF has two registrations — one for user login (Authorization Code flow) and one for service-to-service calls (Client Credentials). Backend services typically have one registration for service-to-service calls. The User Service has none — it only receives calls, never makes authenticated outgoing ones.

The ServiceTokenProvider needs to know which OAuth2 registration to use. This is configurable:

# BFF: uses the 'bff-service' registration for S2S tokens
grpc:
  client:
    oauth2-registration: bff-service

# Catalog Service: uses the default 'auth-server' registration
# No config needed, 'auth-server' is the default

The auto-configuration detects which registrations exist and creates the appropriate beans. If no OAuth2 client registration exists (like in the User Service), no ServiceTokenProvider is created, and the TokenResolver runs in user-only mode: it only forwards existing tokens, never requests new ones.

The Debugging That Made It Work

This whole infrastructure didn't work on the first try. Or the second. Some of the problems I hit:

The @GrpcClient stub was null. The BeanPostProcessor was running before the GrpcChannelFactory existed because post-processors are created very early in Spring's lifecycle. The fix: ObjectProvider<GrpcChannelFactory> for lazy resolution — the factory gets looked up when the first stub is actually injected, not when the processor is created.

// WRONG: factory might not exist yet
public GrpcClientBeanPostProcessor(GrpcChannelFactory factory)

// RIGHT: lazy lookup when needed
public GrpcClientBeanPostProcessor(
        ObjectProvider<GrpcChannelFactory> factoryProvider)

Interceptors silently not registering. I was using @ConditionalOnBean(TokenResolver.class) on the BearerTokenInterceptor bean. But @ConditionalOnBean is evaluated while bean definitions are being registered, and it only sees definitions that have already been processed. The configuration that defines TokenResolver hadn't been processed yet, so the condition evaluated to false and the interceptor silently didn't register. No error. Just missing authentication on every call.

The fix: remove @ConditionalOnBean and rely on Spring's dependency injection instead. If TokenResolver is a parameter of the interceptor's @Bean method, Spring resolves it before calling the method, so the ordering takes care of itself.

// WRONG: condition checked too early, bean skipped
@Bean
@ConditionalOnBean(TokenResolver.class)
public BearerTokenInterceptor interceptor(TokenResolver r) { ... }

// RIGHT: Spring DI handles the dependency
@Bean
public BearerTokenInterceptor interceptor(TokenResolver r) { ... }

Each of these bugs taught me something about Spring's lifecycle that I wouldn't have learned any other way. The auto-configuration ordering, the BeanPostProcessor timing, the ObjectProvider pattern, the difference between conditional checks and dependency injection: this is the deep Spring knowledge that separates "I use Spring" from "I understand Spring."

Tokens in the Wild: Three Real Scenarios

Let me show how the infrastructure handles three real scenarios in the system. Same interceptor chain, three different token strategies.

Scenario 1: User books a machine

A user clicks "Book Now" in the frontend. The BFF has their JWT from the OAuth2 session. The BearerTokenInterceptor finds the user token in Spring's security context and forwards it through every gRPC call in the chain: BFF → Booking Service → Catalog Service → User Service. The user's identity travels with the request.

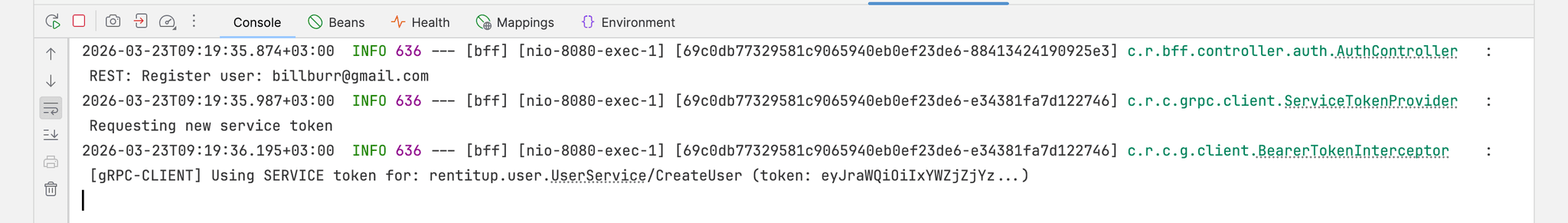

Scenario 2: A new user registers

Here's one that's less obvious. A new user fills in the registration form. The frontend sends it to the BFF. But this user doesn't have a JWT yet — they're not logged in. They don't even exist in the system yet.

The BFF still needs to make a gRPC call to the User Service to create the account. And the User Service requires authentication on most methods — we're running a zero-trust setup where no service accepts unauthenticated calls.

So what happens? The TokenResolver finds no user token (there is no user). It falls back to the ServiceTokenProvider, which calls the auth server with the BFF's client credentials and gets a service token. The BFF authenticates itself as bff-service to create the user on the user's behalf.

This is why the BFF has two OAuth2 registrations — bff-gateway for user login (Authorization Code flow) and bff-service for service-to-service calls (Client Credentials). Different flows, different tokens, same service.

Scenario 3: The 2 AM cron job

The Catalog Service has scheduled jobs that run overnight:

@Slf4j
@Component               // needed so Spring registers the @Scheduled methods
@RequiredArgsConstructor // Lombok: generates the constructor for the final field
public class CatalogJob {

    private final CatalogServices catalogServices;

    @Scheduled(cron = "${cron.update-ratings:0 0 2 * * *}")
    public void updateMachineRatings() {
        log.info("=== Starting machine rating update job ===");
        try {
            catalogServices.updateMachineRatings();
        } catch (Exception e) {
            log.error("=== Machine rating update job FAILED ===", e);
        }
    }

    @Scheduled(cron = "${cron.maintenance-reminder:0 0 8 * * *}")
    public void sendMaintenanceReminders() {
        log.info("=== Starting maintenance reminder job ===");
        try {
            catalogServices.sendUpcomingMaintenanceEmails();
        } catch (Exception e) {
            log.error("=== Maintenance reminder job FAILED ===", e);
        }
    }
}

At 2 AM, updateMachineRatings runs. It needs to call the Booking Service to get review data. At 8 AM, sendMaintenanceReminders needs to call the User Service to get owner email addresses, then the Notification Service to send emails.

There's no user. There's no HTTP request. There's no session. It's a scheduler thread firing at a cron time.

The TokenResolver finds no user token. The ServiceTokenProvider kicks in — calls the auth server with catalog-service client credentials, gets a service token scoped to internal,user:read, caches it. The gRPC calls go out with the service token. The receiving services validate it, see it's from catalog-service with the right scopes, and respond.

No special code in the cron job. No "if it's a scheduled task, do auth differently." The same infrastructure handles it. The service just calls other services and the interceptor chain figures out the authentication.

Zero Trust and Where This Leads

There's a principle running through all of this that I want to name explicitly: zero trust.

No service in the system accepts an unauthenticated gRPC call (except for explicitly configured public methods like user registration). The Catalog Service doesn't trust the Booking Service just because they're on the same network. The User Service doesn't trust the BFF just because it's the gateway. Every call carries a token. Every token is verified against the auth server's public key.

This is application-level security — Layer 7. We're verifying identity and authorization on every request through JWT validation.

But there's a layer below this that we're not protecting: the transport layer. Our gRPC calls are running with usePlaintext() — no TLS. If someone intercepted the network traffic between services, they could read the tokens and the data.

In production, you'd want mTLS (mutual TLS) — where both the client and server present certificates, and the connection itself is encrypted and authenticated. But setting up certificates for each service, managing rotation, handling certificate authorities — that's a significant operational burden.

While researching how to secure the transport layer and watching talks on gRPC security, I kept running into the concept of a service mesh. Tools like Istio and Envoy take all the cross-cutting concerns we've been building — mTLS, load balancing, observability, retries — and move them out of the application code entirely into a sidecar proxy or control plane. Instead of each service managing its own certificates and TLS, the mesh handles it transparently.

I fell into another rabbit hole. The xDS protocol (a set of discovery services — cluster discovery, endpoint discovery, route discovery), how Envoy proxies work as sidecars, and something particularly interesting — proxyless gRPC. This is where gRPC implements xDS internally, so instead of routing traffic through a sidecar proxy, gRPC itself talks to the control plane directly. No extra hop. No sidecar. The service mesh features are built into the transport layer.

But from everything I've been reading and watching, this world is heavily rooted in Kubernetes. The xDS protocol talks about cluster discovery services — and "cluster" means something specific in Kubernetes. Service endpoints, pod networking, ingress controllers — these are Kubernetes concepts that the service mesh is built on top of. I'm not familiar with Kubernetes yet. Going into service mesh without understanding the infrastructure it runs on would be jumping the gun. It would be like trying to understand gRPC load balancing without understanding how channels work — which, if you've been following this series, you know I already tried and it didn't go well.

So the plan is: finish the project, learn Kubernetes properly so that when xDS talks about cluster discovery I actually have a concept of what a cluster means, and then revisit this. Move to a service mesh setup, maybe experiment with proxyless gRPC. That's a future series, not this one.

For now, the application-level zero trust we have works. Knowing that this next layer exists and roughly how it fits together is enough.

The End Result

A service that depends on common and has the right YAML configuration gets everything automatically:

@Service
public class BookingServiceImpl {

    @GrpcClient("CATALOG-SERVICE")
    private CatalogServiceGrpc.CatalogServiceBlockingStub catalogStub;

    @GrpcClient("USER-SERVICE")
    private UserServiceGrpc.UserServiceBlockingStub userStub;

    public void createBooking(CreateBookingRequest request) {
        String userId = GrpcAuthContext.requireUserId();

        // These calls are automatically authenticated
        var machine = catalogStub.getMachine(...);
        var owner = userStub.getUser(...);

        // Business logic, no auth code anywhere
    }
}

No interceptor setup. No token resolution code. No channel configuration. Whether the request came from a user clicking a button or a cron job firing at 2 AM, the infrastructure handles it. The service just does its job.

What I Learned

The common module is the piece of this project I'm most proud of and least confident about simultaneously. It works. Services depend on it and authentication flows correctly. But the auto-configuration internals — the conditional bean creation, the lifecycle ordering, the lazy resolution patterns — I understand them well enough to build and debug, not well enough to teach.

And I think that's fine. This is what breadth-first learning looks like in practice. I went deep enough to build the thing and deep enough to fix it when it broke. The deeper understanding will come as I use it more, hit more edge cases, and read more about Spring's internals.

What I can say is this: building a shared library with auto-configuration taught me more about how Spring Boot actually works than years of just using it. When you're the one registering beans conditionally, you start to understand why Spring's own auto-configuration is structured the way it is. You stop seeing it as magic and start seeing it as a system of rules.