Over-Engineered on Purpose — Part 9: Centralized Configuration, Distributed Tracing, and a Chicken-and-Egg Problem

This is Part 9 of a series where I'm building a microservice platform from scratch. Part 8 covers the common module and gRPC token infrastructure. Full codebase on GitHub.

I was adding the Zipkin sampling rate to the Catalog Service when I stopped and counted. Four microservices. Each one has its own application.yml. Each one needs the same Zipkin config, the same Eureka URL, the same auth server URL, the same gRPC metadata settings. If I need to change the sampling rate from 1.0 to 0.5, I have to edit four files, commit four changes, and restart four services.

This doesn't scale. This is the kind of problem that's fine when you have two services and becomes unmanageable at ten. And the solution has existed in the Spring Cloud ecosystem for years: a config server.

The idea is simple. All your service configurations live in a Git repository. A dedicated Config Server service reads that repository and serves configurations to your microservices on startup. Change a property in Git, and services pick up the new value on their next startup; with a refresh mechanism in place, some can pick it up without restarting at all.

Simple idea. The implementation had a twist I didn't see coming.

Setting Up the Config Server

Spring Cloud Config Server is a new module in the monorepo. It reads configuration from a Git repository and serves it over HTTP. Services request their config by application name — catalog-service gets catalog-service.yml, user-service gets user-service.yml. A shared application.yml in the repo applies to all services.

This is where I moved the common properties. The Zipkin sampling rate, the Eureka connection settings, the auth server URLs — anything shared goes in the common application.yml. Service-specific properties like database URLs and gRPC port numbers stay in the service-specific files.
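As a rough sketch of that split, the two files in the config repo might look something like this. The values are assumptions for illustration, not the repo's actual contents: the Zipkin endpoint is Zipkin's default local address, and the database URL and gRPC port are placeholders.

```yaml
# application.yml in the config repo: served to every service (sketch).
management:
  tracing:
    sampling:
      probability: 1.0   # trace every request during development
  zipkin:
    tracing:
      endpoint: http://localhost:9411/api/v2/spans   # Zipkin's default local endpoint
```

```yaml
# catalog-service.yml in the config repo: served only to catalog-service (sketch).
spring:
  datasource:
    url: jdbc:postgresql://localhost:5432/catalog   # hypothetical database URL
grpc:
  server:
    port: 9091   # hypothetical; the exact property name depends on the gRPC starter in use
```

A property defined in both files resolves to the service-specific value, so the shared file works as a set of defaults.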

The Config Server itself is straightforward:

    spring:
      application:
        name: config-server
      cloud:
        config:
          server:
            git:
              uri: https://github.com/markmumba/rentitup-config
              username: ${GITHUB_USERNAME}
              password: ${GITHUB_PASSWORD}

Since the config repo contains secrets (database passwords, client secrets), it's a private repository. The Config Server authenticates with a read-only token. On the service side, each microservice declares where to find its config:

    spring:
      application:
        name: catalog-service
      config:
        import: "configserver:"
      cloud:
        config:
          discovery:
            enabled: true
            service-id: config-server

Notice what's happening here. The service isn't pointing to a URL like http://localhost:8087. It's saying "find the config server through service discovery", meaning through Eureka.

This is cleaner. The service doesn't need to know the Config Server's address. It asks Eureka, gets the address, and pulls its config. If the Config Server moves to a different port or host, no service configs need updating.
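For that lookup to succeed, the Config Server has to register itself with Eureka under the same name the clients use as `service-id`. A minimal sketch of its local config, assuming the port from the example URL above and the Eureka address used in this project; both are assumptions about the actual setup:

```yaml
# Local application.yml of the Config Server (sketch, Git settings omitted).
server:
  port: 8087   # assumed from the example URL above

spring:
  application:
    name: config-server   # must match the clients' service-id

eureka:
  client:
    service-url:
      defaultZone: ${EUREKA_URL:http://localhost:8081/eureka}
    register-with-eureka: true   # makes the server discoverable by name
```

The Config Server needs the Eureka client dependency on its classpath for this registration to happen.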

And this is exactly where the chicken-and-egg problem shows up.

The Chicken and the Egg

Think about what happens when the Catalog Service starts up.

1. It needs its configuration (database URLs, gRPC ports, auth server addresses)
2. Its configuration lives on the Config Server
3. To find the Config Server, it asks Eureka
4. To connect to Eureka, it needs the Eureka URL
5. The Eureka URL is in its configuration
6. Its configuration lives on the Config Server
7. Go to step 3

The service needs its config to find Eureka. It needs Eureka to find the Config Server. It needs the Config Server to get its config.

If I'd left Eureka on its default port (8761), this might have worked by accident — Spring Cloud has default fallbacks. But I changed Eureka's port to 8081, and that setting was in the Config Server's Git repo. The service couldn't find Eureka because it didn't know the port, and it couldn't get the port because it couldn't find the Config Server, because it couldn't find Eureka.

The fix is a minimal local bootstrap config. Each service keeps just enough configuration locally to find Eureka:

    # Local application.yml: just enough to bootstrap
    spring:
      application:
        name: catalog-service
      config:
        import: "configserver:"
      cloud:
        config:
          discovery:
            enabled: true
            service-id: config-server

    eureka:
      client:
        service-url:
          defaultZone: ${EUREKA_URL:http://localhost:8081/eureka}
        fetch-registry: true
        register-with-eureka: true

With the Eureka URL available locally, the service can find Eureka → discover the Config Server → pull the rest of its configuration. The Config Server's version of the config takes precedence over local values, so any property defined in both places uses the remote version. The precedence order:

    local application.yml < config server properties < env vars / command line args

The service registry itself is the exception: it doesn't use the Config Server. It has all its configuration locally because it needs to be the first thing that's up and running. It's the foundation everything else bootstraps from.
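A minimal sketch of what that self-contained registry config could look like, assuming a standalone Eureka server on the port 8081 mentioned earlier. The application name is hypothetical; the two `eureka.client` flags are the usual settings for a single-node registry.

```yaml
# Local application.yml of the service registry (sketch): no Config Server import.
server:
  port: 8081

spring:
  application:
    name: service-registry   # hypothetical name

eureka:
  client:
    register-with-eureka: false   # a standalone registry does not register with itself
    fetch-registry: false         # and has no peer to fetch a registry from
```

Because nothing here is resolved remotely, the registry can start with no other service running.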

One thing that caught me: I had a config file in the Git repo named catalog-service without the .yml extension. The Config Server couldn't match it to the service. When I fixed the filename and pushed, I only had to restart the Catalog Service — not the Config Server. The Config Server reads from Git on each request, so it picks up changes automatically.

Why I Needed This Now: Distributed Tracing

The Config Server wasn't just about cleanliness. It solved an immediate problem.

I was debugging a booking flow that spans the BFF → Booking Service → Catalog Service → User Service. Something was failing, and I was checking logs in four terminal windows, trying to correlate timestamps manually, guessing which service the error originated in.

This is the fundamental debugging problem in microservices. A single user request touches multiple services, and the logs are scattered across all of them. You need a way to trace a request through the entire system and see where it slowed down or failed.

Zipkin is a distributed tracing system. It collects timing data from your services and visualizes the entire request chain in one view. Each request gets a trace ID that follows it through every service. Within a trace, each service call creates a span with timing data.

Setting it up with Spring Boot is mostly about dependencies and configuration. Add the Micrometer tracing and Zipkin reporter dependencies (which I added to the microservice-conventions plugin in buildSrc so all services get them), and configure the sampling rate:

    management:
      tracing:
        sampling:
          probability: 0.7

A sampling probability of 1.0 means every request is traced; the 0.7 shown here traces roughly 70% of requests. In production you'd lower this, because tracing adds overhead and at high traffic you don't need every request, just a representative sample. But for development, seeing everything is more useful.

This is the config I was adding to each service manually when I realized I needed the Config Server. Now it lives in the shared application.yml in the Git repo. One place. All services inherit it. When I want to change the sampling rate, I change one file.
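The shared file is also a natural home for log correlation. Once tracing is active, the trace and span IDs are available to the logging system, so they can be printed on every log line and matched against what Zipkin shows. The pattern below follows the one given in the Spring Boot tracing documentation; whether this project uses it is an assumption, and recent Spring Boot versions may add these IDs automatically.

```yaml
# Shared application.yml in the config repo (sketch): trace and span IDs in each log line.
logging:
  pattern:
    level: "%5p [${spring.application.name:},%X{traceId:-},%X{spanId:-}]"
```

With this in place, searching all four logs for a single trace ID replaces correlating timestamps by hand.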

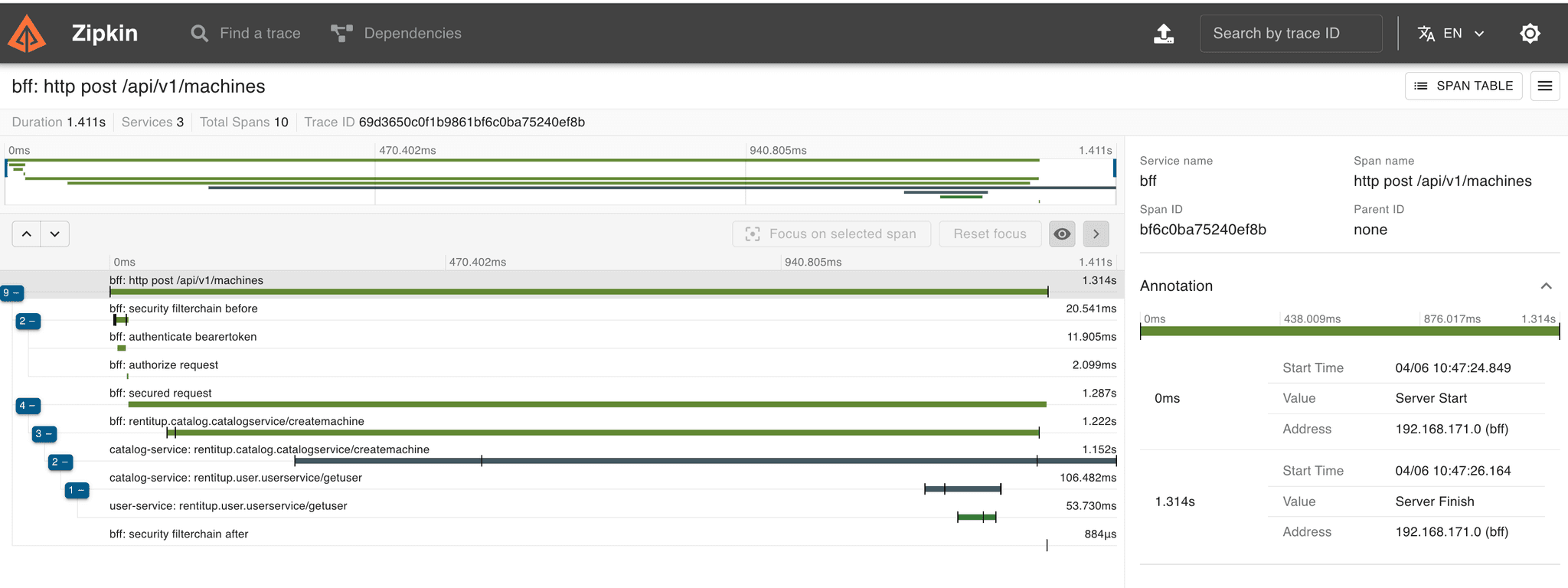

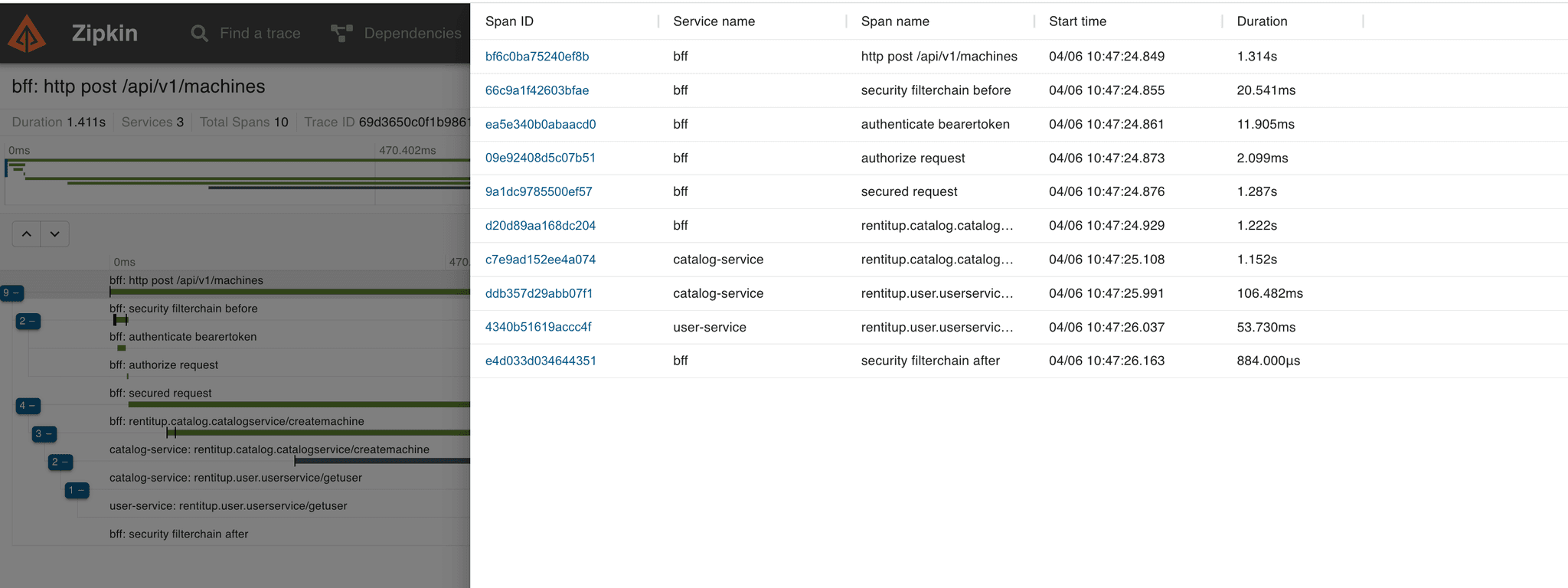

With Zipkin running, I can see the entire request chain:

Where did the request spend its time? Is the Catalog Service slow? Is there a network bottleneck between Booking and User? Zipkin answers these questions in one view instead of four terminal windows.

The sampling rate tradeoff is worth thinking about. Too high and you're burning bandwidth and storage on telemetry for every single request. Too low and you miss the intermittent bugs — the ones that happen on 1 in 100 requests and drive you crazy because you can never reproduce them. There's no universal right answer. Start at 1.0 in development, drop it as you move toward production and understand your traffic patterns.

Profile-Specific Configuration

One more thing the Config Server enables: environment-specific config. You can have catalog-service.yml for default settings and catalog-service-prod.yml for production overrides. When the service's active profile is set to prod, the Config Server serves both files, with the profile-specific one taking precedence.

I'm not using this yet — everything is running locally in development mode. But the structure is there for when deployment becomes relevant. Database URLs, auth server endpoints, sampling rates, connection pool sizes — all the things that differ between environments can be managed per profile without touching application code.
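As a sketch of how such an override could look, a production file only needs the properties that differ from the defaults. The sampling value below is a hypothetical example, not a recommendation.

```yaml
# catalog-service-prod.yml in the config repo (sketch): production overrides only.
management:
  tracing:
    sampling:
      probability: 0.1   # hypothetical production rate; everything else comes from the defaults
```

The service would select it by running with the `prod` profile active, for example by setting the `SPRING_PROFILES_ACTIVE=prod` environment variable or `spring.profiles.active: prod` in its local config.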

What These Two Pieces Solve

The Config Server and distributed tracing are both infrastructure. They don't add features that users see. But they solve problems that get worse the more services you have:

Config Server solves the configuration drift problem. One source of truth for shared properties. Change once, apply everywhere. No more "I updated the sampling rate in three services but forgot the fourth."

Distributed tracing solves the observability problem. When a request touches four services, you need to see the full chain. Where did it fail? Where did it slow down? Which service is the bottleneck?

Together with the service registry (Eureka), the authorization server, and the common module from Part 8, these are the infrastructure pieces that make a microservice architecture actually manageable. Without them, you're just running four separate applications and pretending it's a system.

What's Next

At this point, RentItUp has all the core infrastructure in place. Service discovery, gRPC communication with load balancing, centralized security with an authorization server, a shared common module with auto-configuration, distributed tracing, and centralized configuration.

Next, we'll add caching to the system, and we'll try something different from the norm.